Professors Sylvain Hallé and Hugo Tremblay recently published a paper at the Computer Aided Verification (CAV) conference, held virtually in July 2021. CAV is one of the leading conferences in the field of software verification.

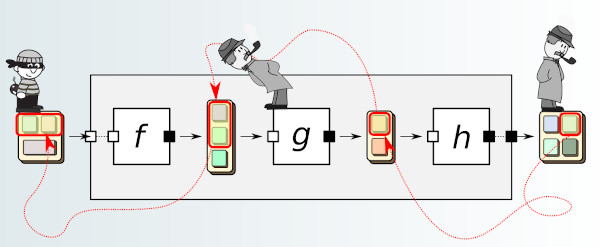

The article, titled *Foundations of Fine-Grained Explainability*, lays the mathematical foundations of a concept called “explainability”. Informally, explainability is a way of relating the output of a computation to the parts of the input that contributed to producing it. What sets their contribution apart is that the proposed explainability relation is *fine-grained*: one can point to a part of the output (for example, a character in a text, an event in a sequence or a cell in a table) and trace back the specific parts of the input associated with it.
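To give a rough feel for the idea, here is a minimal Python sketch of fine-grained lineage tracking. It is neither the formal construction from the paper nor the Petit Poucet API: the names `Traced`, `lift` and `pairwise_sum` are hypothetical, and representing an explanation as a plain set of input positions is a simplifying assumption. Each value carries the positions it was computed from, so any output element can be traced back to specific input elements, even through a chain of operations.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

@dataclass(frozen=True)
class Traced:
    """A value paired with the set of input positions it was computed from.
    (Hypothetical helper for illustration; not part of Petit Poucet.)"""
    value: int
    sources: FrozenSet[int]

def lift(xs: List[int]) -> List[Traced]:
    """Tag each raw input element with its own position."""
    return [Traced(x, frozenset({i})) for i, x in enumerate(xs)]

def pairwise_sum(xs: List[Traced]) -> List[Traced]:
    """Window of width 2: each output depends on both elements of its window,
    so its lineage is the union of their lineages."""
    return [Traced(a.value + b.value, a.sources | b.sources)
            for a, b in zip(xs, xs[1:])]

data = [3, 4, 7, 8, 10, 5]
# Two stages chained together: lineage sets are merged at each step.
result = pairwise_sum(pairwise_sum(lift(data)))
print([t.value for t in result])   # [18, 26, 33, 33]
print(sorted(result[2].sources))   # [2, 3, 4]
print(sorted(result[3].sources))   # [3, 4, 5]
```

The two outputs equal to 33 are explained by different input positions. A purely value-level view could not tell them apart, and this is the kind of distinction a fine-grained relation is meant to capture.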

The theoretical notions put forward have also been implemented in a free software library called Petit Poucet, named after the character in Charles Perrault’s tale who left pebbles behind to find his way back through the forest. Ultimately, these concepts could find applications in many fields: for instance, one could automatically determine why an operation produces a certain value from its input data, or “explain” why a condition on a system is not satisfied.
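As an illustration of the second application, the sketch below checks a simple condition: every reading in a sequence must stay below a limit. Along with its verdict, it returns the input positions that explain that verdict. The function `all_below` and its explanation convention are hypothetical and written only for this post. They are not taken from Petit Poucet or from the paper.

```python
from typing import List, Set, Tuple

def all_below(xs: List[float], limit: float) -> Tuple[bool, Set[int]]:
    """Check that every element is strictly below `limit`.

    Returns the verdict together with an explanation (an assumed convention):
    - if the condition fails, the positions of the offending elements
      (any one of them alone is enough to falsify the condition);
    - if it holds, every position, since each element had to be checked.
    """
    violations = {i for i, x in enumerate(xs) if x >= limit}
    if violations:
        return False, violations
    return True, set(range(len(xs)))

readings = [12.0, 15.5, 31.2, 18.0, 29.9, 40.1]
ok, why = all_below(readings, 30.0)
print(ok, sorted(why))   # False [2, 5]
```

Rather than a bare `False`, the result points directly at the two readings (positions 2 and 5) responsible for the violation.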

The video of the presentation given by Sylvain Hallé at the conference can be viewed below.
